Fine-Tuning Transformer Models for Abstractive Text Summarization

Explored abstractive text summarization by fine-tuning pre-trained transformer models (T5-Small and BART-Large-XSum) on the XSUM dataset, evaluated with ROUGE and BERTScore metrics.

This project explored abstractive text summarization on XSUM, a challenging dataset of 204k entries in which each document is paired with a single-sentence summary. The objective was to evaluate and compare the performance of two pre-trained transformer-based models: T5-Small and BART-Large-XSum.

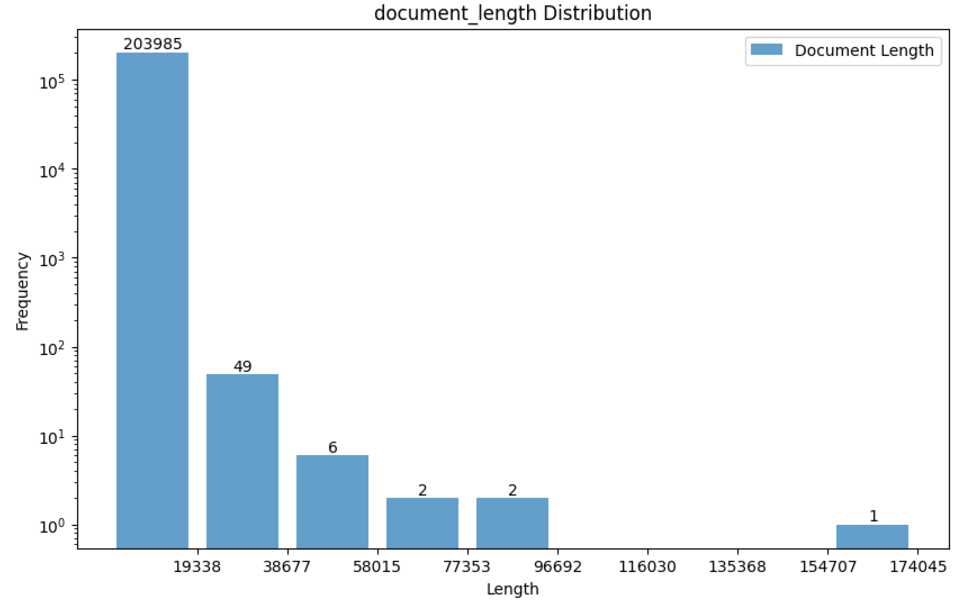

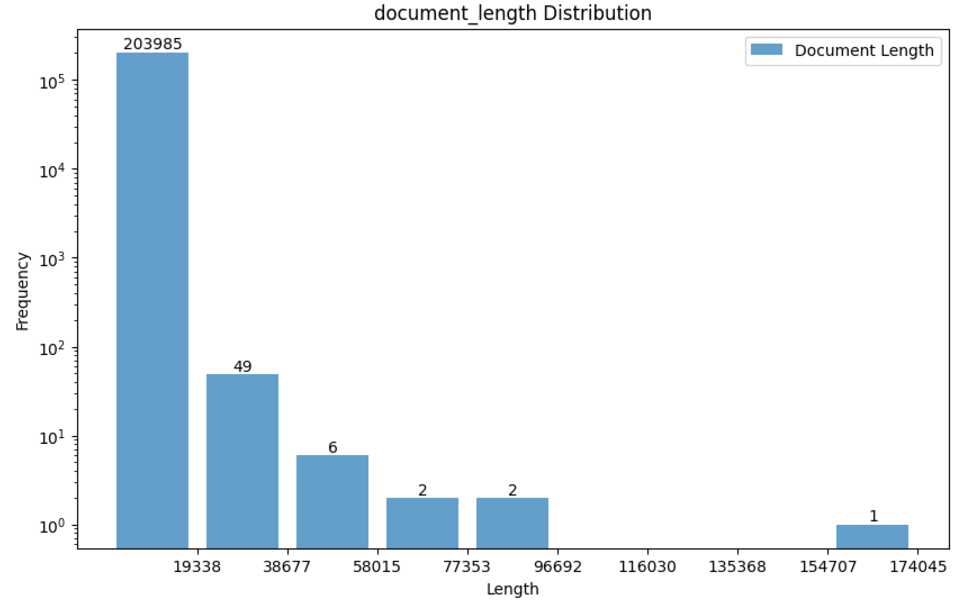

First, I conducted exploratory data analysis (EDA) on the dataset to understand its structure, characteristics, and challenges. Key findings included highly variable document lengths (mean: 2,202 characters) and more consistent summary lengths (mean: 125 characters).
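
Length statistics of this kind could be computed roughly as follows. This is a minimal sketch rather than the project's notebook code: the `document` and `summary` field names assume the Hugging Face version of XSUM, and the two toy records are made up for illustration.

```python
from statistics import mean, median


def length_stats(texts):
    """Return character-length statistics for a list of strings."""
    lengths = [len(t) for t in texts]
    return {
        "mean": mean(lengths),
        "median": median(lengths),
        "min": min(lengths),
        "max": max(lengths),
    }


# Toy records standing in for XSUM rows (hypothetical data, not from the dataset).
records = [
    {"document": "a" * 1000, "summary": "b" * 100},
    {"document": "a" * 3000, "summary": "b" * 150},
]

doc_stats = length_stats([r["document"] for r in records])
sum_stats = length_stats([r["summary"] for r in records])

print("documents:", doc_stats)
print("summaries:", sum_stats)
```

Comparing the spread (`min` to `max`) of the two fields is one simple way to see that documents vary far more in length than summaries.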

I established a baseline by training the T5-Small model on a subset of 10k documents, then fine-tuned it further on 20k documents for 10 epochs. The T5-Small baseline achieved:

- ROUGE-1: 0.2702 (10k subset) → 0.3200 (20k subset, 10 epochs)

- BERTScore F1: 0.8795
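
To make the ROUGE-1 numbers above more concrete, here is a simplified sketch of the metric as unigram-overlap F1. It assumes plain lowercase whitespace tokenization with no stemming, so it will not reproduce the scores reported here exactly; the function name `rouge1_f1` and the example sentences are hypothetical.

```python
from collections import Counter


def rouge1_f1(reference, candidate):
    """Simplified ROUGE-1 F1: unigram overlap between reference and candidate."""
    ref_tokens = reference.lower().split()
    cand_tokens = candidate.lower().split()
    if not ref_tokens or not cand_tokens:
        return 0.0
    # Counter intersection keeps the minimum count of each shared token.
    overlap = sum((Counter(ref_tokens) & Counter(cand_tokens)).values())
    if overlap == 0:
        return 0.0
    precision = overlap / len(cand_tokens)
    recall = overlap / len(ref_tokens)
    return 2 * precision * recall / (precision + recall)


score = rouge1_f1("the cat sat on the mat", "the cat lay on a mat")
print(f"ROUGE-1 F1: {score:.4f}")
```

In this toy pair, four of the six tokens on each side match, so precision and recall are both 4/6.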

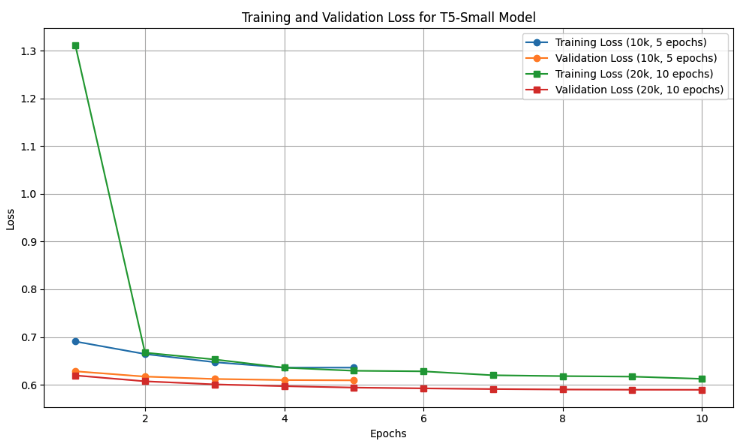

To assess model performance and monitor overfitting, I tracked training and validation loss across epochs for the T5-Small model on the 10k and 20k subsets. The graph below shows the loss decreasing consistently over epochs, indicating effective learning. Notably, the validation loss closely follows the training loss, suggesting good generalization without significant overfitting. These curves guided the choice of training parameters and clarified the model’s learning dynamics.
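
One way to turn this kind of loss monitoring into a check is sketched below. The loss values are made-up placeholders, not the project's measurements, and the helper names and the `patience` threshold are assumptions chosen for illustration.

```python
def generalization_gaps(train_losses, val_losses):
    """Per-epoch gap between validation and training loss."""
    if len(train_losses) != len(val_losses):
        raise ValueError("loss histories must have the same length")
    return [v - t for t, v in zip(train_losses, val_losses)]


def first_overfit_epoch(val_losses, patience=2):
    """Return the 1-based epoch at which validation loss has risen for
    `patience` consecutive epochs, or None if that never happens."""
    rises = 0
    for i in range(1, len(val_losses)):
        if val_losses[i] > val_losses[i - 1]:
            rises += 1
            if rises >= patience:
                return i + 1
        else:
            rises = 0
    return None


# Hypothetical loss histories for illustration only.
train = [2.90, 2.60, 2.40, 2.30]
val = [3.00, 2.70, 2.55, 2.50]

gaps = generalization_gaps(train, val)
print("gaps:", [round(g, 2) for g in gaps])
print("overfit epoch:", first_overfit_epoch(val))
```

A small, stable gap together with no sustained rise in validation loss is consistent with the behavior described above.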

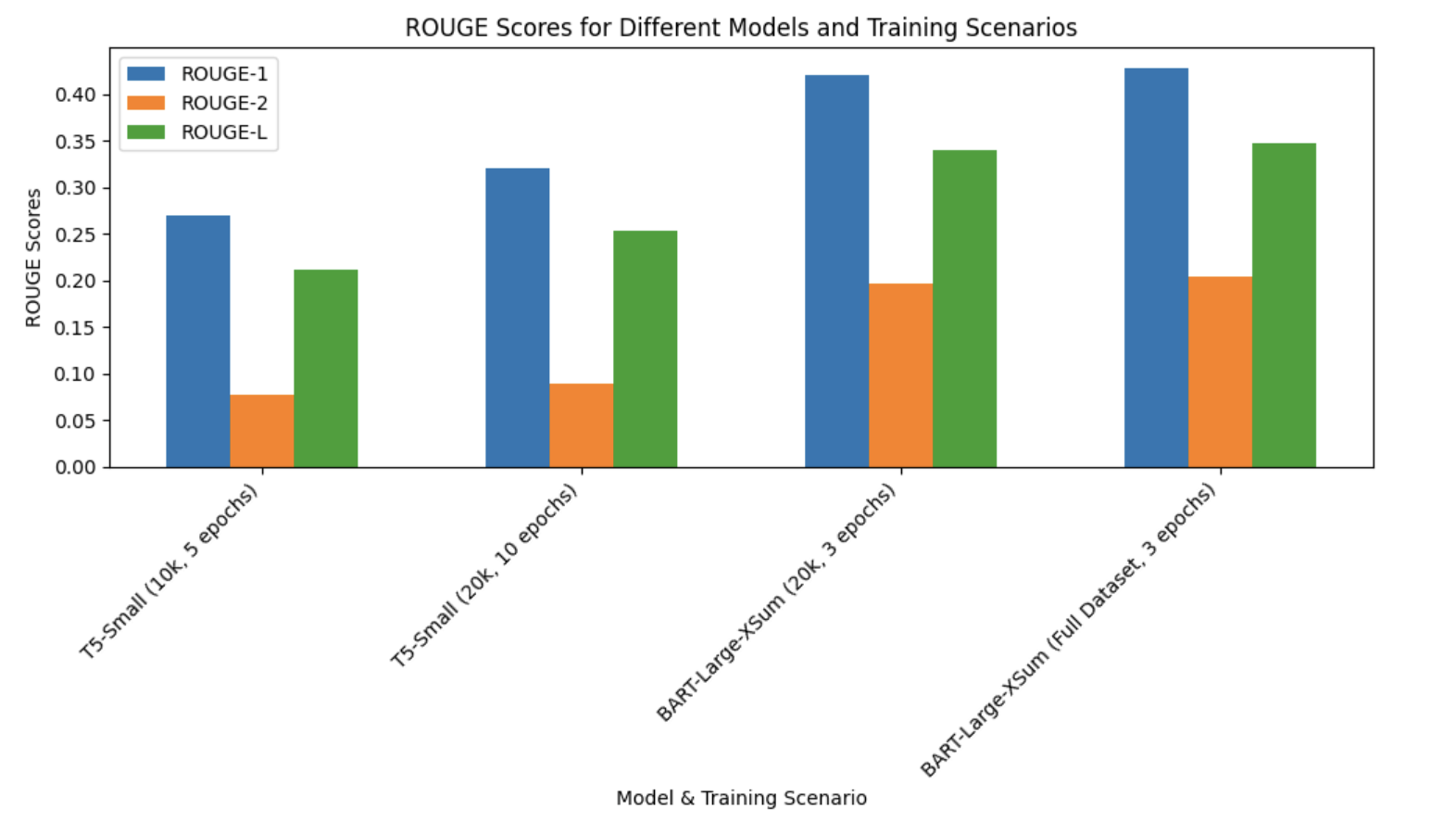

Following the baseline experiments, we conducted final training on the full XSUM dataset using the BART-Large-XSum model on dual NVIDIA A100 GPUs. The aim was to pair the larger model's capacity with the complete training set for the best abstractive summarization performance. This run improved on the earlier experiments, reaching a ROUGE-1 score of 0.4281 and a ROUGE-L score of 0.3475.
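
ROUGE-L differs from ROUGE-1 in that it rewards the longest common subsequence (LCS) of tokens, so word order matters. The sketch below is a simplified version assuming lowercase whitespace tokenization and no stemming; it is not the evaluation code used in this project, and the function names and example sentences are hypothetical.

```python
def lcs_length(a, b):
    """Length of the longest common subsequence of two token lists."""
    prev = [0] * (len(b) + 1)
    for x in a:
        curr = [0]
        for j, y in enumerate(b, start=1):
            if x == y:
                curr.append(prev[j - 1] + 1)
            else:
                curr.append(max(prev[j], curr[j - 1]))
        prev = curr
    return prev[-1]


def rouge_l_f1(reference, candidate):
    """Simplified ROUGE-L F1 based on the LCS of whitespace tokens."""
    ref = reference.lower().split()
    cand = candidate.lower().split()
    if not ref or not cand:
        return 0.0
    lcs = lcs_length(ref, cand)
    if lcs == 0:
        return 0.0
    precision = lcs / len(cand)
    recall = lcs / len(ref)
    return 2 * precision * recall / (precision + recall)


# Same words, different order: unigram overlap is perfect, the LCS is not.
score_l = rouge_l_f1("police arrest man after attack",
                     "man arrest police after attack")
print(f"ROUGE-L F1: {score_l:.4f}")
```

The example shows why ROUGE-L is usually lower than ROUGE-1 for the same model: a candidate that reuses the right words in the wrong order is penalized.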

The figure below highlights the comparison of ROUGE scores across different models and training scenarios, showcasing the progression of performance improvements as the dataset size and model capacity increased.

In addition to quantitative evaluation, we performed qualitative analysis by comparing the summaries generated by T5-Small and BART-Large-XSum against the reference summaries. This analysis revealed that although BART-Large-XSum produced more accurate and contextually aligned summaries than T5-Small, occasional inaccuracies and over-generalizations persisted in challenging cases.

The code, notebooks, and final project report, which contains a more detailed explanation, can be found in this GitHub repository.